Switching to Deployer for Zero-Downtime Deployments

Dear readers,

As the refactoring of foodsharing.de progresses, more and more management tasks need to run at deploy time. For now, these are:

- Invalidating template cache

- Invalidating Dependency Injection container cache

- Installing/updating external PHP packages via Composer

This leads to increasingly unacceptable behaviour:

During a deployment, users continue to use the site. While dependencies are being updated, they may be unavailable for a very short time, and any request during that window is likely to fail.

Also, when removing the caches, we sometimes saw that in the very short time an rm -r takes, some parts had already been recreated, so rm warned about non-empty directories. In our case this is only a cosmetic issue, though.

So, solutions? Yep.

Introducing: Atomic deployment

The strategy is simple: instead of updating the running code, update a second copy, prepare it, and then atomically swap the two copies.

Fortunately, there are tools for this. We have a choice:

- Capistrano: Father of server management tools with atomic deploy capabilities.

- Ansistrano: Atomic deployment for the Ansible server management tool.

- Deployer: Deployment tool for and in PHP.

While we already use Ansible to manage a server for other projects, we do not use it for foodsharing at all yet. So I decided to go for Deployer, which has more than 5000 stars on GitHub.

It was really easy to configure for our task, so easy that I feel you are ready to see most of the configuration file:

```php
set('application', 'foodsharing');
set('repository', 'git@gitlab.com:foodsharing-dev/foodsharing.git');

// Needs to be false when we run in a CI environment
set('git_tty', false);

// Shared files/dirs between deployments to be kept when updating.
set('shared_files', ['config.inc.prod.php']);
set('shared_dirs', ['images', 'data', 'tmp']);

// Dirs writable by web server/PHP. We handle the other shared ones directly
// in the filesystem so the deploy user does not have access to them.
set('writable_dirs', ['tmp', 'cache/searchindex']);
set('http_user', 'www-data');

// Delete the following on a deployment
set('clear_paths', ['tmp/.views-cache', 'tmp/di-cache.php']);

host('beta')
    ->hostname('banana.foodsharing.de')
    ->user('deploy')
    ->set('deploy_path', '~/beta-deploy');

host('production')
    ->hostname('banana.foodsharing.de')
    ->user('deploy')
    ->set('deploy_path', '~/production-deploy');

desc('Create the revision information');
task('deploy:create_revision', './scripts/generate-revision.sh');
```

In addition, some data is shared between the production and beta deployments, since users should see the same profile pictures, food basket images and email attachments in both. We handle this by storing that data in a third folder and symlinking to it from each deployment's shared folder.

The following structure is enforced by the default tasks of Deployer:

```
deploy@banana:~/beta-deploy$ ls -alh
insgesamt 20K
drwxr-xr-x 5 deploy deploy 4,0K Mär 4 01:03 .
drwxr-xr-x 8 deploy deploy 4,0K Mär 4 09:52 ..
lrwxrwxrwx 1 deploy deploy 11 Mär 4 01:03 current -> releases/10
drwxr-xr-x 2 deploy deploy 4,0K Mär 4 01:03 .dep
drwxr-xr-x 7 deploy deploy 4,0K Mär 4 01:03 releases
drwxr-xr-x 3 deploy deploy 4,0K Mär 2 22:00 shared
```

And with the symlinked real data folders in shared it looks like this:

```
deploy@banana:~/beta-deploy$ ls -alh shared
insgesamt 20K
drwxr-xr-x 3 deploy deploy 4,0K Mär 2 22:00 .
drwxr-xr-x 5 deploy deploy 4,0K Mär 4 01:03 ..
-rw--w--w- 1 www-data www-data 1,8K Mär 4 01:03 config.inc.prod.php
lrwxrwxrwx 1 deploy deploy 26 Mär 2 12:37 data -> /var/foodsharing/data
lrwxrwxrwx 1 deploy deploy 28 Mär 2 13:02 images -> /var/foodsharing/images
drwxrwxr-x+ 3 deploy deploy 4,0K Mär 4 01:10 tmp
deploy@banana:~/beta-deploy$
```
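To make the layout easier to follow, here is a rough shell sketch that rebuilds it in a scratch directory. The paths under the temporary directory are stand-ins for /var/foodsharing and the two deploy paths, the file name profile.png is made up, and the commands only illustrate the idea; they are not what Deployer runs internally. The real data lives in one place, each deployment's shared folder links to it, and each release links to shared.

```shell
#!/bin/sh
set -eu

# Hypothetical stand-ins for the real server paths.
root=$(mktemp -d)
data_root="$root/var/foodsharing"   # the "third folder" holding the real data
mkdir -p "$data_root/images" "$data_root/data"
echo "avatar" > "$data_root/images/profile.png"

for env in beta production; do
    deploy_path="$root/$env-deploy"
    mkdir -p "$deploy_path/shared/tmp" "$deploy_path/releases/1"

    # shared/ points at the common data, so both environments see the same files.
    ln -s "$data_root/images" "$deploy_path/shared/images"
    ln -s "$data_root/data"   "$deploy_path/shared/data"

    # Every release links to shared/, so the data survives new releases.
    for dir in images data tmp; do
        ln -s "$deploy_path/shared/$dir" "$deploy_path/releases/1/$dir"
    done
    ln -s releases/1 "$deploy_path/current"
done

# Both environments reach the same file through their own "current" link.
cat "$root/beta-deploy/current/images/profile.png"
cat "$root/production-deploy/current/images/profile.png"
```

Both cat calls print the same content, because the two chains of symlinks end in the same directory.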

This shows how atomic deployments work:

- For each deployment, create a completely new version of the software inside `releases/`, in a folder with a new number.

- After successfully preparing the deployment, i.e. processing it according to the configuration, switch the `current` symlink to point to the new release.

Because a symlink can be replaced atomically (by renaming a new link over the old one), the deployed code is always in a valid state as seen from the outside.
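The switch itself can be sketched in a few shell commands. This is a minimal illustration and not necessarily what Deployer does internally: the scratch directory, the release numbers and the helper link name current.tmp are made up for the example, and mv -T assumes GNU coreutils. The important detail is that the new link is created under a temporary name and then renamed over current. A rename replaces the old link in a single step, whereas deleting and recreating the link would leave a short gap in which current does not exist.

```shell
#!/bin/sh
set -eu

# Hypothetical deploy path in a scratch directory (not the real server layout).
deploy_path=$(mktemp -d)
cd "$deploy_path"

# Two releases: 1 is live, 2 has just been prepared.
mkdir -p releases/1 releases/2
echo "old code" > releases/1/index.php
echo "new code" > releases/2/index.php
ln -s releases/1 current

# Create the new link under a temporary name, then rename it over "current".
# The rename replaces the old link in one step, so "current" always exists.
ln -s releases/2 current.tmp
mv -T current.tmp current

readlink current        # prints: releases/2
cat current/index.php   # prints: new code
```

The old release stays on disk untouched, so rolling back is the same operation with the link pointing at releases/1 again.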

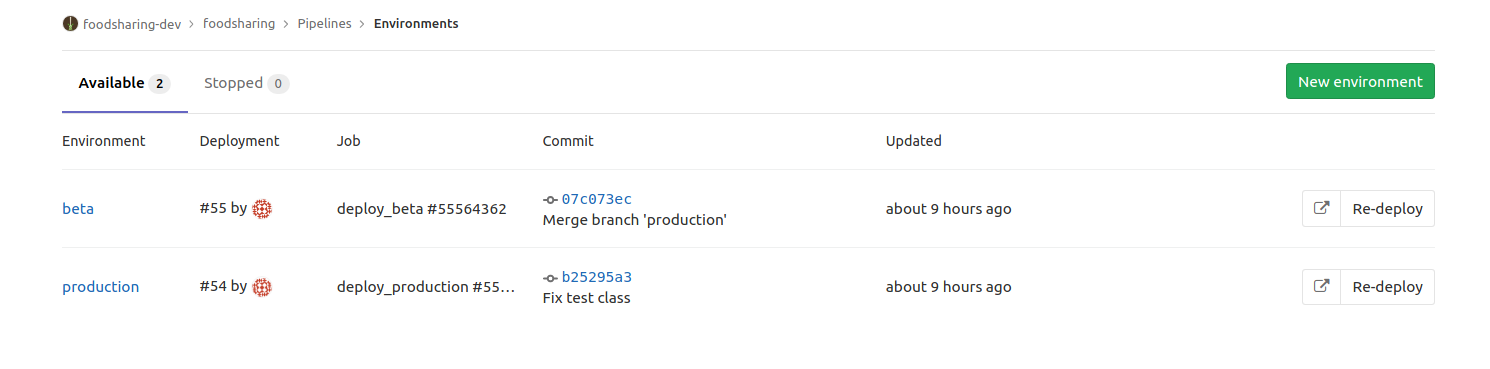

Integrating Deployer into GitLab CI works nicely. As we had already started to use environments, GitLab shows us the deployment status:

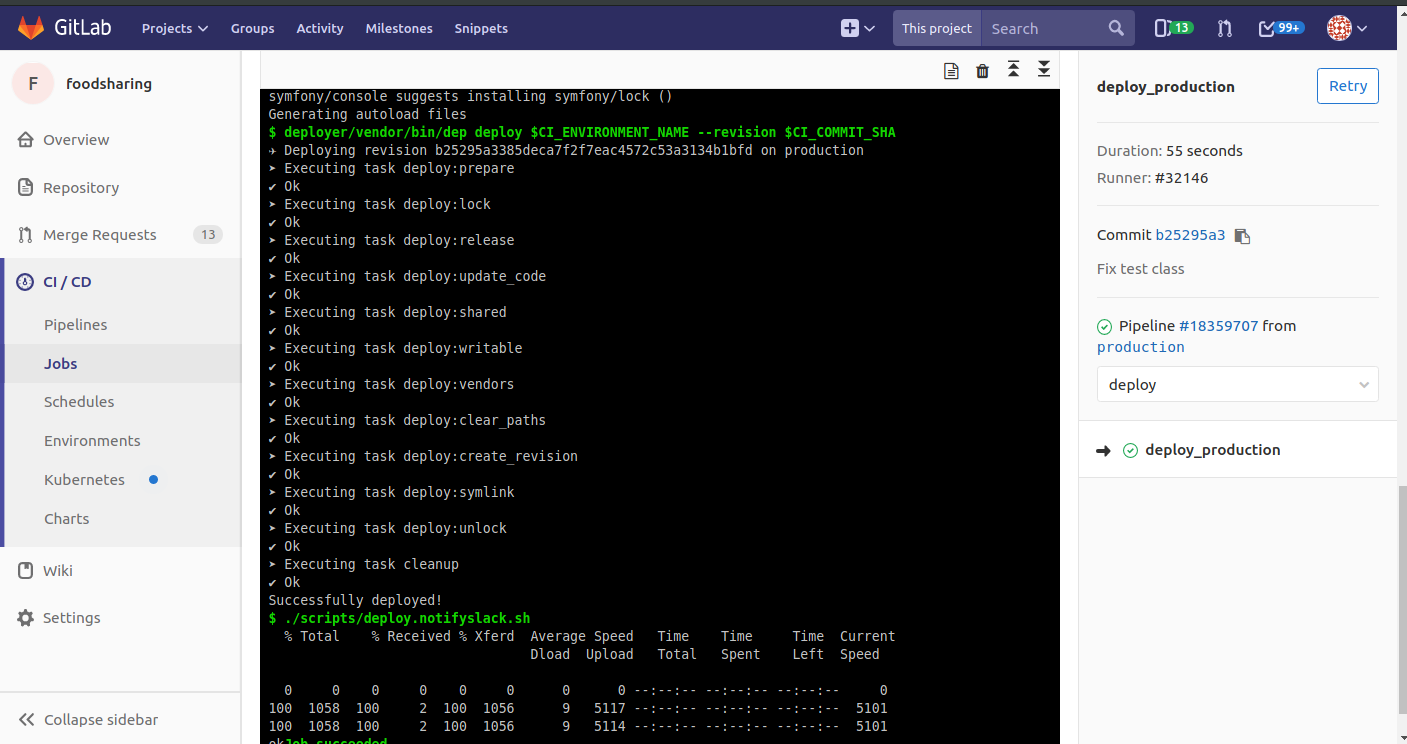

And here is the CI pipeline output for the Deployer run, which is actually not very interesting when nothing failed:

All done? No…

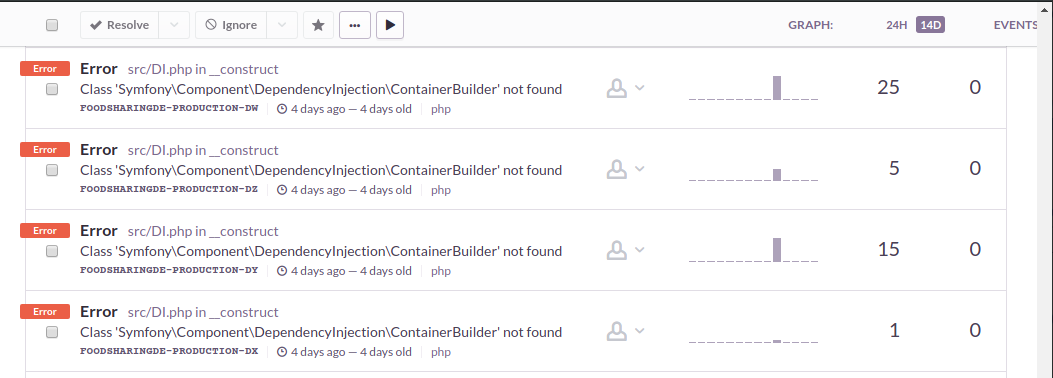

After setting this up, it all seemed fine. Then yesterday came: we had an annoying regression. I already knew that something was wrong when I saw ~1000 errors per day in a code path that did not seem critical, but was important enough to be looked at soon.

What failed?

Call:

```php
$this->bellGateway->addBell($params, $closable = false);
```

bellGateway::addBell:

```php
$bid = $this->db->insert('fs_bell', ['params' => $params, 'closeable' => $closeable]);
```

This is a recently refactored codepath, now using the newer PDO layer for database access.

$bid was zero in one specific API call under specific user circumstances: exactly those that are the only ones leading to $closable being set to false.

Unfortunately, I had to dig for almost three hours (and was quite desperate after the first one) to find the conditions needed to reproduce it. I had written unit tests and acceptance tests but missed the small $closable = false, so I could never reproduce the problem locally, only in production.

When I finally managed to reproduce it, the rest was quick: our database access library used PDO::PARAM_BOOL to bind boolean parameters. Unfortunately, PHP people don’t really manage to get platform-independent libraries right: MySQL, our database, does not support binding booleans. So what should a good library do?

Just fail silently and don’t execute the query

Obvious, isn’t it?

A bug about this has been open in the PDO bug tracker since 2006!

So beware of the combination of PDO, MySQL and PDO::PARAM_BOOL. But that is not all: it only helped me discover another problem in the morning.

I fixed the bug and deployed to production. Still, I had some errors logged in the morning regarding this issue! What happened?

We use PHP with opcode caching. The PHP opcode cache identifies a cache entry by its file name, file size, date of last modification and possibly a file hash.

Unfortunately, a cache lookup does not re-resolve symlinks in the file path. The cache therefore does not notice that current now points to a new release and keeps serving the cached code of the old release, whose entries are still considered valid.

Easy solution:

Nginx, the webserver used in this configuration, can fully resolve all symlinks before it passes the request to PHP.

Just replace $document_root with $realpath_root in your fastcgi parameters file (or in the appropriate PHP location section if it appears there); this typically concerns the SCRIPT_FILENAME and DOCUMENT_ROOT parameters.

Because the opcode cache is indexed by the path of the requested file, this works as long as every new release ends up in a new release folder.
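The effect can be illustrated on the command line. The following is only a sketch with a made-up scratch directory and release numbers; readlink -f (GNU coreutils) stands in for the symlink resolution that nginx performs for $realpath_root. The path through current is the same string before and after a deployment, while the resolved path changes with every release, which is why a cache keyed by the resolved path cannot keep serving the old code.

```shell
#!/bin/sh
set -eu

# Hypothetical scratch layout, not the real server.
deploy_path=$(mktemp -d)
cd "$deploy_path"
mkdir -p releases/1 releases/2
touch releases/1/index.php releases/2/index.php
ln -s releases/1 current

# Path as seen through the symlink vs. fully resolved path, before the switch.
via_link_before="$PWD/current/index.php"
resolved_before=$(readlink -f current/index.php)

# Switch to the new release (new link under a temporary name, then rename).
ln -s releases/2 current.tmp
mv -T current.tmp current

via_link_after="$PWD/current/index.php"
resolved_after=$(readlink -f current/index.php)

echo "via symlink: $via_link_before -> $via_link_after"
echo "resolved:    $resolved_before -> $resolved_after"
```

The first line of output shows the same path twice, the second shows releases/1 turning into releases/2.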

Et voila, everything is fine now :-)